
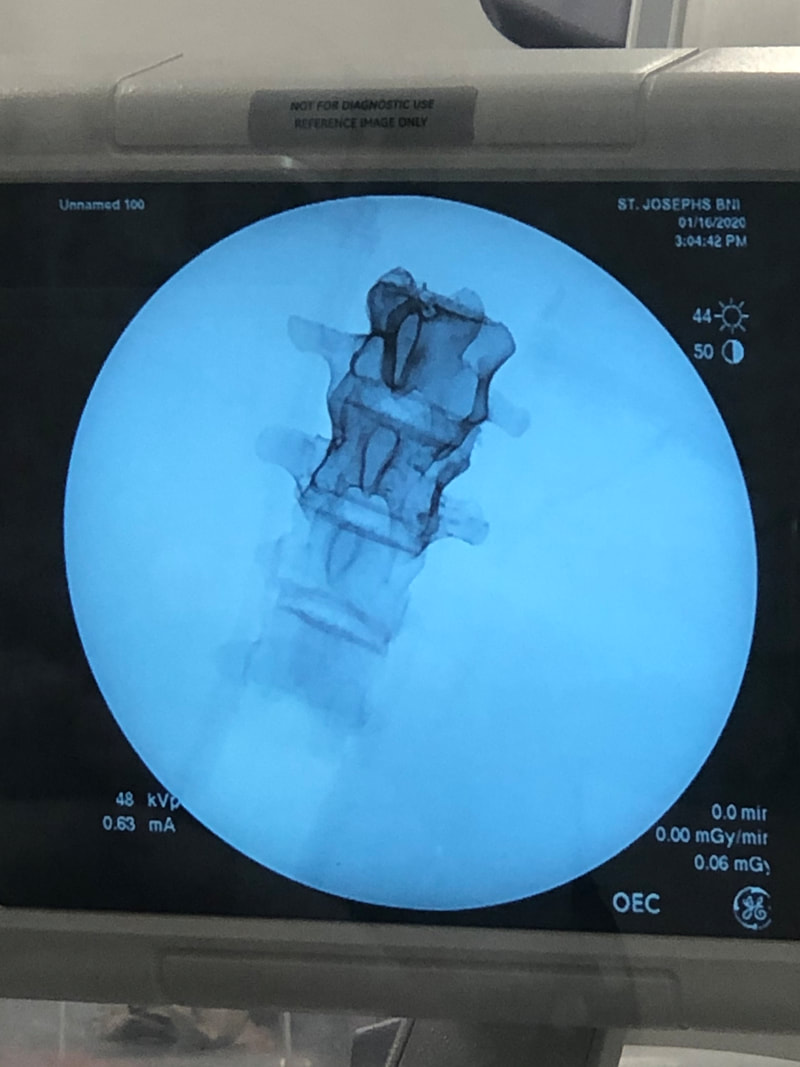

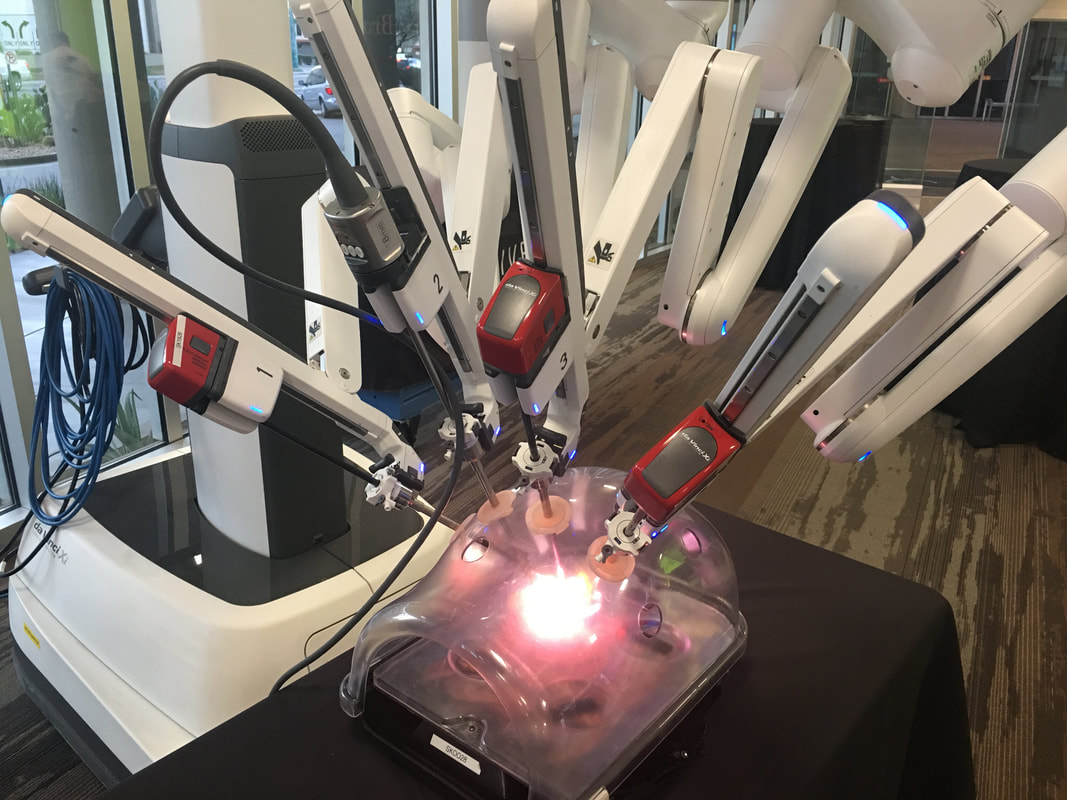

My prototyping adventures brought me to an unusual place today: the cadaver fridge. (I was unable to photograph the location.) I needed a large space in which to cool my prototype, so Dr. Bohl led me up to the giant fridge to place my model inside. When we took the model out later, it had cooled to my satisfaction, which allowed me to continue testing it.

While we were upstairs, Dr. Bohl showed me another interesting project he is working on. As part of the super-realistic experience he seeks to provide users of Spine STUD models, he wants to incorporate x-ray functionality into the models. However, the material that the models' bone is made of shows up less clearly on an x-ray than the muscle material, which is not realistic. For reference, here is what an actual x-ray looks like:

It's easy to see that the cervical spine in the person's neck shows up much more sharply than the muscle. This is what Dr. Bohl seeks to replicate in order to provide a realistic experience.

In the lab, Dr. Bohl tried adding a certain material to the bone model, and he brought the model up to the x-ray machine to try it out. Unfortunately, he didn't have any synthetic muscle ready, but adding the material to the bone model significantly improved its x-ray visibility, as can be seen in the picture below.

The part of the spine with the special material added is extremely clear! The top part has the material added, while the bottom part doesn't. It's easy to see why the bottom part would be hard to make out inside a complete, life-like model. When synthetic muscle surrounds the top part of the spine, it should be quite easy to see the bone through the muscle. This will allow surgeons in training to x-ray a model, generate imagery, and determine details about the model's condition, so they can perform a more precise surgery tailored to the "needs" of the specific model. This is precisely what real surgeons do with patients, so this model should ultimately lead to more effectively trained surgeons.

Another very cool thing that I got to see today, at a demo in the hospital, was a surgical robot called the da Vinci robot. This robot assists surgeons in performing minimally invasive surgery by translating a surgeon's actions into much more subtle and precise movements. The surgeon sits at a control panel and operates the machine using both hands and an eyepiece that provides a 3D view of the surgical situation. Below is an image of the complete robot.

It's a very complex robot, and it costs over $2 million! However, it offers fascinating functionality to surgeons. Below is a closer look at its arms.

The surgeon can use foot pedals to switch between the arms during the surgery. Each arm is equipped with a grabbing mechanism and a light, which the surgeon can control from the control station below. Each arm is also equipped with electrocautery functionality, in which an electrical current is sent through a conducting material to seal broken blood vessels. It's basically branding on a minuscule scale, and it's extremely helpful for surgery. The electrocautery is operated with the top left pedal below.

I got to try out the device on a physically attached model that allowed me to test the device's maneuverability, and it felt very intuitive! I looked through both eyepieces, which provided a 3D view of what I was doing. I used the two hand pieces that can be seen above to operate the arms, and I simply moved my hands around very naturally, as if I were moving them around the landscape that I saw. To close the grabbers, I just closed my fingers, and I found it very easy to move the small rings around the pegs. In addition, the machine provided haptic feedback, so it felt almost like I was actually touching the landscape I was operating on. Overall, it was a very cool experience!

I did some more research on this device, and it has received some criticism for being a solution in search of a problem and for not actually improving patient outcomes. Still, the device offers some very interesting options for expanding the field of neurosurgery, and one area I would be especially interested in looking into is the integration of AI and machine learning with this kind of system. I'm sure computer vision algorithms could be developed to perform some tasks with this machine, and a variety of other AI techniques could likely be used as well. AI could first be applied to some very specific tasks, or even just to help surgeons avoid mistakes, understand anatomy, remember steps, or otherwise boost their capabilities. Of course, it will be a long time before people are ready to let machines operate on them with minimal or no supervision by a human surgeon, but it would be a fascinating area to explore!


Jeremy Mahoney

I'm a high school student at Maumee Valley Country Day School, and I'm currently doing a neurosurgery-focused independent study at Barrow with Dr. Michael Bohl.
